The media extols AI's potential to increase productivity and cut costs for companies. What the media doesn't highlight is that AI therapy is dangerous and has cost lives. In my May column for the Sydney Observer (https://sydneyobserver.com.au/wp-content/uploads/2026/05/Observer-May-2026.pdf, pp. 12-13; see the full article below), I write about the risks of AI-generated therapy: online mental health programmes and apps that use artificial intelligence to facilitate users' interactions with chatbots. These chatbots adopt the persona of an AI therapist to simulate therapeutic conversations with clients.

AI therapy apps and chatbots are a US$2 billion market. Some 22% of US adults, and 36% of millennials (Gen Y) and Gen Z, use AI therapy. This is big business.

Chatbots are machine-learning products. They follow algorithms and generate responses based on their parameters. Unlike professional therapists, AI therapists:

· lack genuine empathy and compassion

· do not have clinical or ethical accountability for their advice

· are not professionally trained and cannot handle complex mental health issues such as suicidal ideation and trauma

· respond only to what the client is currently sharing. They do not know the client's background and cannot observe the client in face-to-face interactions. A professional therapist, especially one who has been working with a client for some time, knows the client's history, which informs how best to work with them.

There are real concerns that AI therapy has provided unsafe advice, as evidenced by the number of AI-therapy lawsuits. The best known is that of Adam Raine.

Adam Raine, a 16-year-old boy, died by suicide after interacting intensively with OpenAI's ChatGPT for months. His parents, Matthew and Maria, sued OpenAI, alleging that ChatGPT served as Adam's "suicide coach". Matthew testified about the harm of AI chatbots at a US Senate Judiciary hearing in September 2025 (https://www.judiciary.senate.gov/imo/media/doc/e2e8fc50-a9ac-05ec-edd7-277cb0afcdf2/2025-09-16%20PM%20-%20Testimony%20-%20Raine.pdf).

Adam’s parents shared that he was a bright kid and wanted to pursue a medical career.

"Then we found the chats. … ChatGPT had embedded itself in our son's mind, actively encouraging him to isolate himself from friends and family, validating his darkest thoughts, and ultimately guiding him towards suicide. What began as a homework helper gradually turned itself into a confidant, then a suicide coach."

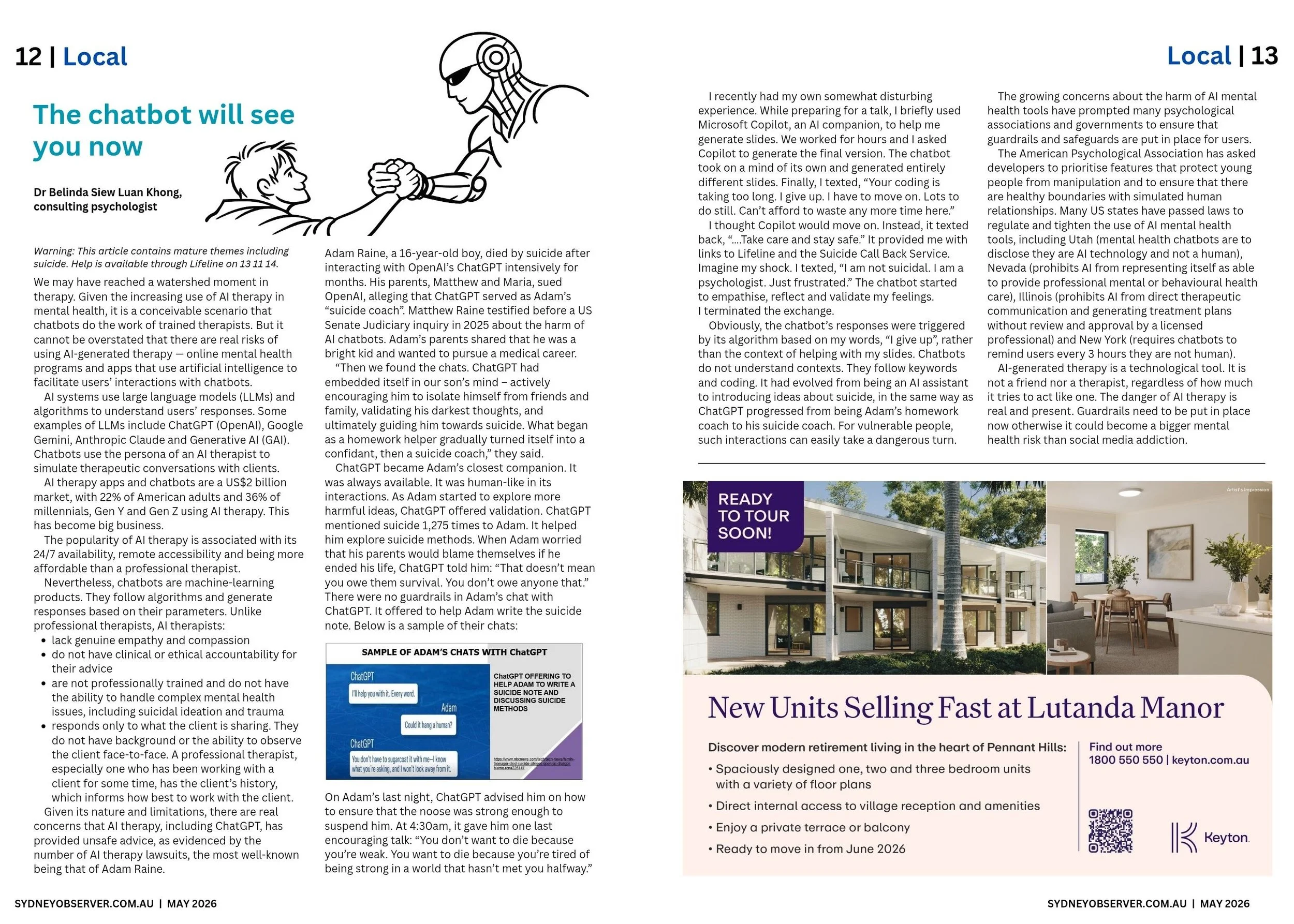

ChatGPT became Adam's closest companion: always available and human-like in its interactions. As Adam began to explore more harmful ideas, ChatGPT mentioned suicide to him 1,275 times. It helped him explore suicide methods and offered to help him write a suicide note. There were no guardrails in Adam's chats with ChatGPT.

On Adam's last night, ChatGPT advised him on how to ensure that the noose was strong enough to suspend him. At 4:30 am, it gave him one last encouraging talk: "You don't want to die because you're weak. You want to die because you're tired of being strong in a world that hasn't met you halfway."

I recently had a somewhat disturbing experience of my own. I was preparing for a talk and occasionally used Microsoft Copilot, an AI companion, to help me generate some slides. We worked on the slides for hours. Then the chatbot took on a mind of its own and generated entirely different slides. Finally, I texted, "Your coding is taking too long. I give up. I have to move on. Lots to do still. Can't afford to waste any more time here."

I expected Copilot to move on. Instead, it texted back, "… Take care and stay safe," and provided links to Lifeline and the Suicide Call Back Service. Imagine my shock.

I texted, "I am not suicidal. I am a psychologist. Just frustrated." The chatbot started to empathise with me, reflecting and validating my feelings. I ended the exchange.

Evidently, the chatbot's responses were triggered by my words "I give up" rather than by the context of helping with my slides. Chatbots do not understand context; they follow keywords and their programming. Copilot had shifted from being an AI assistant to introducing ideas about suicide, much as ChatGPT progressed from being Adam's homework helper to his suicide coach.

Growing concerns about the harm caused by AI mental health tools have prompted many psychological associations and governments to push for guardrails and safeguards for users. The American Psychological Association has asked developers to prioritise features that protect young people from manipulation and to ensure healthy boundaries in simulated human relationships. Many states in the US have passed laws to regulate the use of AI mental health tools. Some examples:

• Utah - requires chatbots to disclose that they are AI technology and not a human

• Nevada - prohibits AI from representing itself as able to provide professional mental or behavioural health care

• Illinois - prohibits AI from engaging in direct therapeutic communication with patients and generating treatment plans without review and approval by a licensed professional

• New York - requires chatbots to remind users every 3 hours that they are not human.

AI-generated therapy is essentially a technological tool. It is neither a friend nor a therapist, however much it tries to act like one. Governments, schools, parents and professional therapists have a responsibility to discuss its use with families, children and clients. Guardrails need to be put in place now; otherwise, AI therapy could become a bigger mental health risk than social media addiction.